You have a quality management system. Perhaps an environmental management system. You know what it means to have a policy, assign responsibilities, and conduct audits. ISO 42001 is the same thing, applied to AI systems.

It is not a technical standard. It does not ask how your algorithms work. It asks who is responsible when something goes wrong, whether you know which AI tools are used in the organisation, and whether you have assessed your AI vendors in the same way you assess other suppliers.

If you already work with ISO 9001 or ISO 27001, the logic is familiar. What is new is the thing being managed: AI systems rather than quality or information security.

What ISO 42001 actually is

ISO/IEC 42001:2023 is an internationally certifiable standard for AI management systems. ISO published it in December 2023. It follows the same underlying structure as ISO 9001 and ISO 27001, with clauses 4–10 covering context, leadership, planning, support, operations, performance evaluation, and improvement.

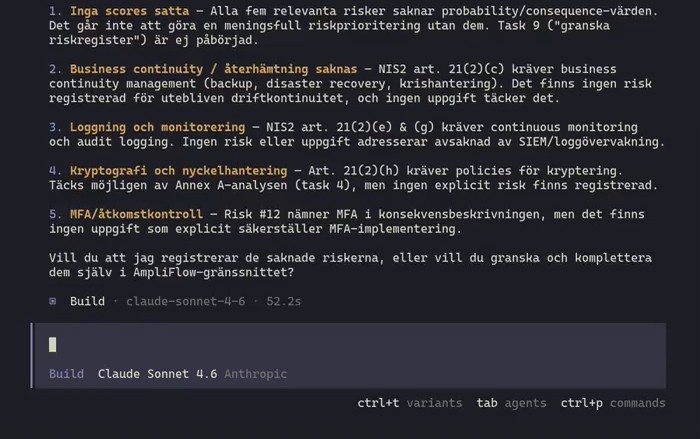

What makes the standard unique are three AI-specific requirements: AI risk assessment (6.1.2), risk treatment (6.1.3), and impact assessment (6.1.4). The last concerns how an AI system affects individuals and society, not only the organisation.

Then there is Annex A, with 38 controls across nine domains, from policy and data management to third-party relationships.

Why now?

Two things are happening at the same time.

The first is the EU AI Act. The regulation entered into force in August 2024, and its first obligations have applied since 2 February 2025. Among them, Article 4 requires providers and deployers of AI systems to ensure that their staff have a sufficient level of AI literacy. Not just those who build AI. Everyone who uses it at work. That requirement is already in force.

On 2 August 2026, most of the remaining provisions are scheduled to apply, including requirements for risk management, documentation, and human oversight for high-risk systems. If your organisation uses AI to prioritise cases, analyse staff data, score customers, or make decisions that affect people, the system may be classified as high-risk. Having an organised approach to AI governance before that date is better than starting to build one when a regulator comes knocking.

The second is Shadow AI. Research suggests that 60 per cent of the AI tools used in businesses have been built or installed outside IT’s control.[1] These are not trainees experimenting. These are managers who found a useful tool and started using it. The result: operational data passes through systems no one has assessed, with unclear accountability if something goes wrong.

Samsung has security resources that most Swedish SMEs will never have. In April 2023, it emerged that several of its engineers had pasted confidential source code into ChatGPT. No malicious intent. They were debugging code in exactly the way the tool is designed for. The data went to OpenAI’s servers. Samsung’s response was not better training. It was to ban generative AI tools on company devices.[2]

Competence does not protect you. Governance does.

The five controls: what they actually require

ISO 42001 is a voluntary standard; the EU AI Act is binding law. They are not the same thing. But the standard gives you a structured way to meet the requirements the law imposes, and a documented answer to the questions auditors and customers are starting to ask.

Annex A contains 38 controls. Five of them address directly what makes AI governance difficult in practice. Here is what they actually require, in the standard’s own language.

A.2.2: AI policy

Standard text: “The organization shall document a policy for the development or use of AI systems.”

In practice: you need a written policy stating which AI systems you approve for use, under what conditions, and what is required of employees who use them. It does not need to be a long document. It needs to be a clear position. Something like: “We use AI-assisted tools for X and Y. Customer data must not be entered into consumer versions of external AI services. Responsible: [name].”

A policy is primarily about clarity, not control. People want to know what the rules are.

A.3.2: Roles and responsibilities

Standard text: “Roles and responsibilities for AI shall be defined and allocated according to the needs of the organization.”

This is not an IT responsibility. It is an operational responsibility. Someone in your organisation needs to own the issue: we use AI systems, and if something goes wrong, it is that person’s job to know what happened. It does not need to be a new job title. It can be the quality manager. It can be the operations manager. But it must be someone.

The difference from how most organisations handle AI today: today, nobody owns the question.

A.5.2: Impact assessment

Standard text: “The organization shall establish a process to assess the potential consequences for individuals and societies that can result from the AI system throughout its life cycle.”

When do you need to conduct one? When a new AI system is introduced, when an existing system is used in a new way, or when the system influences decisions that directly affect people. It is not required for every tool. But for systems where an AI recommendation error results in an employee receiving incorrect pay, a customer being denied credit, or a case being deprioritised, you need a documented process to assess and manage that risk.

Think of it as a risk assessment, with a broader perspective than just the organisation’s own interests.
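The three triggers above can be written down as a simple decision rule. The sketch below is only an illustration: the class name `AIUseCase`, its fields, and the example systems are assumptions made for this example and do not come from the standard.

```python
from dataclasses import dataclass


@dataclass
class AIUseCase:
    """Hypothetical description of one AI system in one context of use."""
    name: str
    newly_introduced: bool = False         # a new AI system is being introduced
    used_in_new_way: bool = False          # an existing system gets a new purpose
    affects_people_directly: bool = False  # influences pay, credit, case priority, etc.


def needs_impact_assessment(use_case: AIUseCase) -> bool:
    """Return True if any of the three triggers described in the text applies."""
    return (
        use_case.newly_introduced
        or use_case.used_in_new_way
        or use_case.affects_people_directly
    )


# Illustrative examples only
spell_checker = AIUseCase(name="spell checker already in use")
payroll_scoring = AIUseCase(name="payroll anomaly scoring", affects_people_directly=True)

print(needs_impact_assessment(spell_checker))    # False
print(needs_impact_assessment(payroll_scoring))  # True
```

In practice the same three questions can sit in a form or a spreadsheet. What matters is that they are asked every time and that the answer is recorded.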

A.9.2: Responsible use

Standard text: “The organization shall define and document the processes for the responsible use of AI systems.”

What does this mean? You need clear processes covering which AI systems are approved for use in the organisation, who approves new tools, and how employees report problems. These are approval processes, not prohibition lists. The goal is to make it straightforward to use AI correctly, not to make it difficult to use AI at all.

Without documented processes, employees find their own solutions. With clear processes, they have a list to start from.

A.10.3: Supplier assessment

Standard text: “The organization shall establish a process to ensure that its usage of services, products or materials provided by suppliers aligns with the organization’s approach to the responsible development and use of AI systems.”

OpenAI and Anthropic are your suppliers when your employees use their tools with your data. It does not matter whether this is via an enterprise licence or a personal account. You need to assess them in the same way you assess other critical suppliers: what happens to our data, what certifications do they hold, what do they do with the information we send?

That is not bureaucracy. That is supplier management, exactly as you have always done it.
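One way to keep track of the three questions above is a small record per supplier. This is a minimal sketch under stated assumptions: the class `AISupplierAssessment` and its field names are hypothetical, and a real assessment would normally live in your existing supplier register.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AISupplierAssessment:
    """Hypothetical record of answers for one AI supplier (None = not yet answered)."""
    supplier: str
    data_handling: Optional[str] = None            # what happens to our data
    certifications: Optional[list[str]] = None     # certifications held (may be an empty list)
    use_of_submitted_data: Optional[str] = None    # what they do with the information we send

    def open_questions(self) -> list[str]:
        """List the questions from the text that are still unanswered."""
        missing = []
        if self.data_handling is None:
            missing.append("What happens to our data?")
        if self.certifications is None:
            missing.append("What certifications do they hold?")
        if self.use_of_submitted_data is None:
            missing.append("What do they do with the information we send?")
        return missing


assessment = AISupplierAssessment(supplier="Example AI vendor")
for question in assessment.open_questions():
    print(question)
```

An empty list from `open_questions()` means the questions have been answered, not that the answers are acceptable. That judgment stays with the person responsible.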

The relationship with ISO 27001

If you hold ISO 27001, you may wonder whether ISO 42001 duplicates the work. It does not.

ISO 27001 addresses information security: how do you protect your information from unauthorised access, loss, and manipulation? ISO 42001 addresses AI governance: how do you control how AI systems are used, who is responsible for them, and what impact they have?

An organisation can have excellent information security and still lack an answer to the question “who approves new AI tools?” These are different problems.

In practice they overlap on data management and supplier assessment. If you already have ISO 27001 processes for supplier evaluation, you can extend them to cover AI suppliers. If you have an information classification scheme, use it as the basis for deciding which data may be entered into external AI services.

ISO 42001 follows the same High Level Structure (now called the harmonized structure) as other modern ISO management system standards. Organisations that are already certified can build on what they have.
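To make the link between an information classification scheme and AI services concrete, here is a minimal sketch. The four classification levels, the three service categories, and the limits in `MAX_ALLOWED` are all assumptions chosen for illustration. Your own scheme and policy decide the real values.

```python
from enum import IntEnum


class Classification(IntEnum):
    """Hypothetical four-level information classification scheme."""
    PUBLIC = 0
    INTERNAL = 1
    CONFIDENTIAL = 2
    RESTRICTED = 3


# Assumed policy: the highest classification each category of AI service may receive.
MAX_ALLOWED = {
    "consumer": Classification.PUBLIC,
    "enterprise": Classification.INTERNAL,
    "self_hosted": Classification.CONFIDENTIAL,
}


def may_enter(data_class: Classification, service_category: str) -> bool:
    """Return True if data of this classification may go into this service category.

    Unknown service categories are denied by default.
    """
    limit = MAX_ALLOWED.get(service_category)
    return limit is not None and data_class <= limit


print(may_enter(Classification.INTERNAL, "consumer"))    # False
print(may_enter(Classification.INTERNAL, "enterprise"))  # True
```

Denying unknown categories by default mirrors the approval logic described earlier: a tool nobody has assessed is not yet on the list.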

Reasonable first steps

Three things will give you a solid foundation without needing to build a complete management system from day one.

Take an inventory of which AI systems you actually use. Not just those procured and approved by IT. Ask managers and team leads to report which tools they and their teams are using. You will find more than you expect. The result is not a list to prohibit, but a list to work with.

Write a simple AI policy. One page. What is permitted, what requires approval, what is prohibited. Communicate it. A policy that everyone knows about is worth more than a detailed document that nobody has read.

Appoint a responsible person. The quality manager. The operations manager. Someone. That person does not need to be an AI expert. They need to know that they are the point of contact when someone asks what the rules are, and that they keep the approved tools list up to date.

These three steps directly address what A.2.2, A.3.2, and A.9.2 require. They also give you a reasonable response if Article 4 of the EU AI Act were to be examined: you can demonstrate that you have taken an organised approach to AI use.
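The inventory in the first step comes down to comparing two lists: what IT has approved and what teams say they use. The sketch below assumes you have collected both. The function name `find_shadow_ai` and the tool and team names are placeholders, not real products or a prescribed method.

```python
def find_shadow_ai(
    approved_by_it: set[str],
    reported_by_teams: dict[str, set[str]],
) -> dict[str, list[str]]:
    """Map each reported tool that IT has not approved to the teams using it."""
    unassessed: dict[str, list[str]] = {}
    for team, tools in reported_by_teams.items():
        # Set difference: tools this team uses that are not on the approved list
        for tool in tools - approved_by_it:
            unassessed.setdefault(tool, []).append(team)
    return {tool: sorted(teams) for tool, teams in sorted(unassessed.items())}


# Placeholder data for illustration
approved = {"Tool A"}
reported = {
    "Sales": {"Tool A", "Tool B"},
    "HR": {"Tool B", "Tool C"},
}

for tool, teams in find_shadow_ai(approved, reported).items():
    print(f"{tool}: used by {', '.join(teams)}")
```

The output is the list to work with: each unassessed tool and who depends on it, ready for the responsible person to approve, replace, or restrict.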

ISO 42001 certification is voluntary. The EU AI Act is not. But the two are connected: the standard gives you a way to show that you are working systematically on precisely the questions the law raises. Being able to answer “who is responsible for AI in your organisation” and “which AI systems are used with which data” will become something customers, partners, and auditors expect as a matter of course.

For background on why this question has become urgent, see the article “Why are we paying for software that AI can build for free?”, which covers how Shadow AI has become an acute issue and how ISO 42001’s controls address it concretely. To see how AmpliFlow supports ISO 42001 implementation, book a call.