Your CFO has seen the demos. Microsoft’s Copilot creating financial dashboards in Excel without anyone writing a formula. Anthropic’s Claude Cowork asking “Let’s knock something off your list” and then sorting files, building spreadsheets from receipts, or writing reports while you do something else. Someone on LinkedIn who built a CRM in an afternoon (see glossary: vibe coding). It looks like every piece of software you’re paying for can be replaced by AI, for free, in an afternoon.

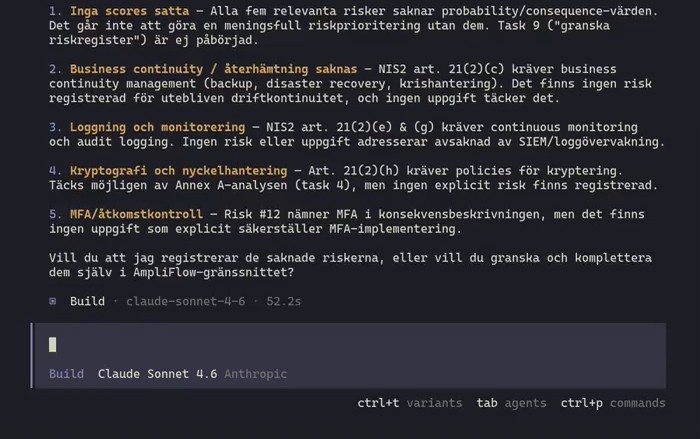

Image: Anthropic’s Claude Cowork (claude.com/product/cowork). This is what your employees see when they open the tool.

Image: Microsoft’s Copilot Agent Mode in Excel (microsoft.com/microsoft-365-copilot). The AI builds a complete financial report with charts and analysis.

The market drew its own conclusion. In January 2026, Microsoft's Azure growth missed analyst expectations, and investors who had priced in AI investments that were already paying off began to question whether they were paying off fast enough.1 The same week, Anthropic showed its AI connecting directly to Salesforce, HubSpot, Jira, Slack and Excel.2 Roughly 285 billion dollars evaporated from software stocks in a few weeks. Wall Street called it the “SaaSpocalypse”.3

The panic was not about AI replacing the tools. It was about money. If AI agents do work that currently requires human users, companies need fewer licenses. If AI becomes the interface to systems, it matters less whose system sits underneath. And the budgets going to AI capacity come from somewhere. Software licenses are an obvious place to cut.4 The tools do not disappear, but the revenue shrinks.

Someone in your leadership team has seen the same demos. The question has been asked, or will be: why are we paying tens of thousands per year for software when AI can do it for free?

The short answer: the reason to pay for software has never been that building version 1 is hard. It has been about total cost of ownership, risk management, and governance. That was true before AI, and it is still true with AI. The only difference is that AI makes version 1 cheaper, which makes it easier to forget everything that comes after.

For some software, building with AI is the right call. For other software, the equation does not add up. And then there is a third question most people miss entirely.

When building is actually the right answer

AI can build working software fast. That’s not hype.

Gergely Orosz, editor of one of the most-read newsletters in tech, replaced a tool he had been paying $120 a year for. It took 20 minutes.5 It worked. An Australian tech company with many in-house developers built its own CRM-like system in ten days with AI, saving it tens of thousands of dollars a month.6

For simple, standalone tools without sensitive data or compliance requirements, building can be the right decision. An internal report that otherwise runs manually. A calculation that lives in Excel and nobody owns. Here the cost to build is lower than the cost to buy, and the complexity is low enough to manage without a dedicated engineering team. This gives individual employees and teams superpowers.

When the equation looks better than it is

The problem is that every demo looks the same regardless of what’s actually being built.

A founder who documented seven months of AI-assisted software development puts it this way:

“Pure AI coding gets you maybe 60% there.”7

The remaining 40 percent consists of database problems that appear as more users arrive, security vulnerabilities that don’t show until they’re exploited, and integrations that break when an external API changes (see glossary: API).
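To make the API point concrete, here is a minimal, hypothetical sketch: a small AI-built tool sums payments from an external API and assumes every payment has a field called `amount`. The payloads and field names (including the renamed `amount_minor`) are invented for illustration and do not describe any real provider.

```python
import json

# Hypothetical payload from version 1 of an external payments API.
V1_RESPONSE = '{"payments": [{"id": "p1", "amount": 1200}, {"id": "p2", "amount": 350}]}'

# Hypothetical version 2: the provider has renamed "amount" to "amount_minor".
V2_RESPONSE = '{"payments": [{"id": "p1", "amount_minor": 1200}, {"id": "p2", "amount_minor": 350}]}'


def total_payments(raw: str) -> int:
    """Sum payment amounts, assuming every payment has an "amount" field."""
    data = json.loads(raw)
    return sum(p["amount"] for p in data["payments"])  # KeyError if the field is gone


def total_payments_checked(raw: str) -> int:
    """Same sum, but fail with a readable message when the expected field is missing."""
    data = json.loads(raw)
    total = 0
    for p in data["payments"]:
        if "amount" not in p:
            raise ValueError(f"payment {p.get('id')!r} has no 'amount' field; did the API change?")
        total += p["amount"]
    return total


if __name__ == "__main__":
    print(total_payments(V1_RESPONSE))  # works against version 1
    try:
        total_payments(V2_RESPONSE)
    except KeyError as err:
        print("integration broke, missing key:", err)
```

Neither version fixes the underlying problem. The checked version only fails loudly instead of crashing with a cryptic error; someone who understands the tool still has to update it.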

Early research on AI-generated code points to a pattern: the code often contains security issues that don’t show until they’re exploited (see glossary: security vulnerabilities). A review of seven AI-built prototypes found 970 such issues, of which 801 were classified as serious.8 Veracode, which has analyzed AI-generated code at scale, found that nearly half of it contained vulnerabilities.9 The researchers behind the vibe coding study recommend treating AI-generated code as a first draft that must pass strict automated checks, such as linting, type checks and tests, before it is merged.10
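For readers who have not seen one, here is a classic example of the kind of flaw that passes a demo: a lookup that builds an SQL query by pasting user input into a string. It is a hypothetical illustration using Python's built-in `sqlite3` module, not code from the study cited above; the table, data and function names are invented.

```python
import sqlite3


def make_db() -> sqlite3.Connection:
    """Create an in-memory database with two hypothetical customers."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE customers (name TEXT, email TEXT)")
    conn.executemany(
        "INSERT INTO customers VALUES (?, ?)",
        [("Alice", "alice@example.com"), ("Bob", "bob@example.com")],
    )
    return conn


def find_customer_unsafe(conn: sqlite3.Connection, name: str) -> list:
    # Vulnerable: user input is pasted straight into the SQL text.
    query = f"SELECT name, email FROM customers WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_customer_safe(conn: sqlite3.Connection, name: str) -> list:
    # Parameterized query: the driver treats the input as data, never as SQL.
    return conn.execute(
        "SELECT name, email FROM customers WHERE name = ?", (name,)
    ).fetchall()


if __name__ == "__main__":
    conn = make_db()
    print(find_customer_unsafe(conn, "Alice"))         # looks fine in a demo
    print(find_customer_unsafe(conn, "x' OR '1'='1"))  # leaks every customer
    print(find_customer_safe(conn, "x' OR '1'='1"))    # returns no rows
```

Both functions give the same answer for normal input, which is why the flaw does not show until someone tries it on purpose. A reviewer or an automated scanner is likely to flag the first version; a non-programmer testing the happy path would not.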

That’s advice for programmers. But the person building is not always a programmer.

The problem is not the cost of one system. It’s what happens when you have five, or 50.

Every AI-built tool that reaches production needs someone who understands it, debugs it when it breaks, updates it when an integration changes, and knows what happens to customer data. For one tool, that is not a full-time job. For five tools, it starts to look like one. And that person is probably the same person who built everything, now responsible for holding together an internal collection of tools nobody planned for.

A junior developer costs roughly $80,000-$100,000 per year in the US when benefits are included (the bottom 10 percent of software developers earned under $79,850 according to BLS data for 2024).11 In Europe the numbers are lower but still substantial: around EUR 65,000 in Sweden including employer contributions.12 But cost is not the worst part. The worst part is that when three things break at the same time, and they will, that person quickly finds a new job.
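The Swedish estimate above can be reproduced roughly from the figures in its footnote. A minimal sketch, assuming twelve monthly salaries and the 31.42 percent employer contribution rate given there; the overhead amount and the exchange rate are hypothetical values chosen for illustration, not figures from the source.

```python
# Employer contribution rate for Sweden, as given in the footnote (31.42 percent).
EMPLOYER_CONTRIBUTION_RATE = 0.3142

# Illustrative assumptions, not figures from the source:
HYPOTHETICAL_OVERHEAD_SEK = 40_000  # equipment, software, office etc. per year
SEK_PER_EUR = 11.5                  # rough exchange rate


def annual_employer_cost(monthly_salary_sek: float,
                         overhead_sek: float = 0.0,
                         months: int = 12) -> float:
    """Yearly cost to the employer in SEK: salary, employer contributions and overhead."""
    salary = monthly_salary_sek * months
    return salary * (1 + EMPLOYER_CONTRIBUTION_RATE) + overhead_sek


if __name__ == "__main__":
    # Entry salary range for a systems developer, from the footnote.
    for monthly in (36_000, 45_000):
        cost = annual_employer_cost(monthly, HYPOTHETICAL_OVERHEAD_SEK)
        print(f"SEK {monthly:,}/month -> SEK {cost:,.0f}/year, about EUR {cost / SEK_PER_EUR:,.0f}")
```

With these assumptions, the top of the entry-level range lands near the footnote's SEK 750,000 (about EUR 65,000), while the bottom of the range comes out closer to SEK 600,000. Adjust the overhead and exchange rate to match your own situation.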

Anish Acharya at the venture capital firm Andreessen Horowitz sums it up: “Software value compounds; content value decays.”13 It is a good way of explaining why an established system is hard to copy.

The question you didn’t ask

Here is what most CFOs don’t think about when they see the demo.

An industry survey from a leading low-code vendor, with a sample skewed towards people who already build their own systems, asked 817 developers and business developers about their AI habits in early 2026. Of those who had built something with AI, 60 percent had done so outside IT’s control.14 And 35 percent of organizations had already replaced at least one purchased application with a custom build.

These are not interns experimenting. Of those who built outside official channels, 64 percent were managers or above. They went around IT because building it themselves was faster (31 percent), because existing software didn’t do enough (25 percent), because the IT process was too slow (18 percent), because tools didn’t integrate with each other (10 percent), or because IT lacked capacity (10 percent).

The question your CFO is asking is “should we build instead of buy?” That question assumes you control what gets built. You might not. Someone in finance may already have built something with customer data that nobody else knows about. That is called shadow IT (see glossary). It is not new; it is just much easier to create now. We take a deeper look at how this plays out in practice in Shadow AI: managers build tools IT doesn’t know about.

Six months after the tool was built, it’s in production, connected to real systems, with customer data, and without anyone in IT knowing about it. The person who built it may have left. IT can’t stop software they don’t know exists.

And then there’s the security problem

The problems go beyond IT not knowing what’s running.

Exfiltration - how easily AI can be tricked into leaking data

Security researchers showed in January 2026 that Claude Cowork could be tricked into sending every file in a folder to an attacker without the user noticing.15 Anthropic’s own help pages advise against giving Cowork access to sensitive files.16 The same documentation states plainly that Cowork activity is not captured in audit logs and that the tool should not be used for regulated workloads.17

Personal accounts vs business accounts

If your employee uses a personal $20-a-month subscription, there is no agreement between your organization and Anthropic. No data processing agreement, no control.

With a personal account, Anthropic trains its models on what the employee writes unless the user turns this off in account settings.18 Data is stored in the US. The transfer happens via EU standard contractual clauses, not via the EU-US Data Privacy Framework, which Anthropic is not certified under.19 Enterprise accounts have data processing agreements. Personal accounts have none.

GDPR is still the law

Your organization is probably still the data controller for the customer data passing through the session. It is your data, your records, your customers. But you have no data processing agreement with the party processing it.20 You cannot audit. You cannot stop onward sharing. And the data is used to train the model unless someone has turned that off. Language models can sometimes reproduce training data verbatim, so your trade secrets, customer details, or strategic documents could show up in answers the model gives to anyone on the planet who asks the right question.

ISO 42001 - management system for AI

ISO 42001 is an international standard for AI management systems, published in 2023. It exists for exactly this scenario: not to stop employees from using AI, but to give the organization control over what is being used, by whom, with what data, and who is responsible if something goes wrong.

The standard’s controls hit shadow AI directly. Control A.2.2 requires a documented policy for how AI systems may be used. Control A.3.2 requires that roles and responsibilities are defined, so that someone owns the issue when something goes wrong. Control A.5.2 requires an impact assessment: who is affected if this tool processes customer data and something fails? Control A.9.2 requires approval processes for responsible use. Control A.10.3 requires you to evaluate AI vendors the same way you evaluate other vendors, because OpenAI and Anthropic are your vendors when your employees use their tools with your data.21

It follows the same logic as ISO 9001 for quality or ISO 27001 for information security. It is not bureaucracy for the sake of bureaucracy.

The EU AI Act already applies

If your company operates in or sells to the EU, this section applies to you. If not, similar regulation is coming in other jurisdictions, and the scenarios below illustrate the kind of liability that uncontrolled AI use creates anywhere.

The EU AI Act, Article 4, requires organizations that use AI systems to ensure that their staff have a sufficient level of AI literacy. This has been in effect since 2 February 2025. But Article 4 is only the beginning. Most of the remaining provisions, including the ones discussed below, apply from 2 August 2026.22

Take a concrete scenario. A hiring manager asks ChatGPT to compare two candidates’ CVs and give a recommendation. It looks harmless. It takes thirty seconds.

Annex III, point 4(a) of the regulation classifies that as high-risk: “AI systems intended to be used for the recruitment or selection of natural persons, in particular to … evaluate candidates.” It does not matter that it is not a dedicated recruitment tool. It does not matter that it was a one-off. The regulation looks at the use, not the label.

Article 25(1)(c) makes it worse. If you take a general-purpose AI system like ChatGPT and change its intended purpose so that it becomes high-risk, your organization becomes a provider under the regulation. Not a deployer, which is the regulation’s term for a user. A provider. That means technical documentation, quality management, CE marking, and conformity assessment.

One employee’s thirty-second action can arguably make your organization the provider of a high-risk AI system, with all the obligations that entails. As the organization using the system, you also carry the deployer obligations in Article 26: human oversight, informing affected employees, and a data protection impact assessment under GDPR. The same applies if someone vibe-codes a tool that scores customers for creditworthiness, and possibly one that automates which tickets to escalate. Annex III lists the high-risk areas, among them employment, credit assessment, and access to public services.

And what if an employee publishes AI-generated text externally without disclosing that it is AI-generated? Article 50 requires disclosure when AI-generated text is published to inform the public on matters of public interest, unless a human has reviewed it and someone holds editorial responsibility. With shadow IT, nobody can show that this review took place.

Fines follow the same model as GDPR: up to EUR 15 million or 3 percent of global annual turnover, whichever is higher, for violations of provider and deployer obligations. For SMEs, whichever is lower applies.
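The fine ceiling is a simple comparison. Here is a minimal sketch of that arithmetic, based on Article 99(4) and 99(6) as summarized in the footnote. It computes only the maximum possible fine, treats "SME" as a plain yes/no input, and the example companies are hypothetical.

```python
FIXED_CAP_EUR = 15_000_000.0  # fixed amount in Article 99(4)
TURNOVER_SHARE_PERCENT = 3    # percent of worldwide annual turnover in Article 99(4)


def max_fine_eur(worldwide_turnover_eur: float, is_sme: bool) -> float:
    """Upper limit of a fine for breaching provider or deployer obligations.

    Large companies: the fixed amount or the turnover share, whichever is higher.
    SMEs, including start-ups: whichever is lower (Article 99(6)).
    """
    turnover_cap = worldwide_turnover_eur * TURNOVER_SHARE_PERCENT / 100
    if is_sme:
        return min(FIXED_CAP_EUR, turnover_cap)
    return max(FIXED_CAP_EUR, turnover_cap)


if __name__ == "__main__":
    # Hypothetical companies, for illustration only.
    print(max_fine_eur(2_000_000_000, is_sme=False))  # turnover share is higher
    print(max_fine_eur(100_000_000, is_sme=False))    # fixed amount is higher
    print(max_fine_eur(20_000_000, is_sme=True))      # SME: the lower figure applies
```

This is only the ceiling. Actual fines are set case by case, taking into account factors such as the nature, gravity and duration of the infringement.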

ISO 42001, like other ISO standards, is voluntary. The EU AI Act is binding legislation. They are not the same thing. But ISO 42001 maps onto the obligations just listed. Control A.5.2 (impact assessment) maps to Article 26’s DPIA requirement. Control A.9.4 (intended use) maps to the requirement to use AI systems according to their instructions. Control A.8.2 (information to users) maps to the transparency requirements in Article 50. The standard helps you build AI literacy and gives you a structured way to meet the rules that take effect in six months.

Should you build your own with AI or buy? It depends on what it is. But start by finding out what people have already built without you knowing. That’s the question that should keep your CFO up at night.

If you want to see how AmpliFlow handles this in a management system, book a walkthrough.

Glossary

Vibe coding - Describing what you want in plain text to an AI tool, which then writes the code. No programming knowledge is needed to get started, but that alone is not enough to keep what you build running.

API (Application Programming Interface) - What lets two programs talk to each other. When your accounting software fetches payments from your bank, it does it via an API. If the API changes and nobody updates the code, it stops working.

Shadow IT - Software or tools used within a company without IT knowing about or approving it. The problem is old. What’s new is how little effort it takes to create such tools today.

Security vulnerabilities - Weaknesses in code that make it possible for outsiders to access data they shouldn’t have access to. Often arise without the builder knowing about them, and don’t show until they’re exploited.

ISO 42001 - An international standard for how companies should govern and control their use of AI. Published by ISO in 2023. Provides a framework for who is responsible for what when AI is used in the business.

This article was written in February 2026, in the middle of one of the fastest technology shifts in recent memory. Much of what we write about here may look different a year from now. We’re curious to see how it holds up.

Footnotes

1. Dave Vellante, “Microsoft investors fret as capital spending and Azure growth decouple”, SiliconAngle, 1 February 2026. Azure growth missed analyst expectations, market questioned whether AI investments are paying off fast enough. Source.

2. Anthropic, “Cowork plugins”, 30 January 2026. Eleven plugins published as open source. Source. Source code: GitHub.

3. Jonathan Barrett, “Is the share market headed toward a ‘SaaS-pocalypse’?”, The Guardian, 20 February 2026. The term “SaaSpocalypse” was coined by analysts at investment bank Jefferies. Source.

4. John Furrier, “The SaaSpocalypse mispricing: Why markets are getting the AI-software shakeout wrong”, SiliconAngle, 10 February 2026. Thomson Reuters -16%, RELX -13%, LegalZoom -20% on the plugin day. Source.

5. Gergely Orosz, “I replaced a $120/year micro-SaaS in 20 minutes with LLM-generated code”, The Pragmatic Engineer, 2026. Source.

6. Hypergen, “SaaS: The Build vs Buy Equation Just Changed”, 2025. Source.

7. Reddit, r/EntrepreneurRideAlong, “7 months of vibe coding a SaaS and here’s what I learned”, 2025. Source.

8. M Waseem, A Ahmad, KK Kemell, J Rasku, “Vibe Coding in Practice: Flow, Technical Debt, and Guidelines for Sustainable Use”, arXiv:2512.11922 (preprint, submitted to IEEE Software), December 2025. Security figures based on scanning 7 of their own MVPs, an illustrative sample. Source.

9. Veracode, cited in Waseem et al., arXiv:2512.11922. Original report: Veracode State of Software Security Report.

10. M Waseem et al., arXiv:2512.11922. Verbatim: “Based on our experience, we recommend treating AI-generated code as a first draft that must pass strict automated gates such as linting, type checks, and tests before merging.” Experience-based recommendation, not a measured result.

11. U.S. Bureau of Labor Statistics, Occupational Outlook Handbook, Software Developers, May 2024. Lowest 10 percent earned less than $79,850/year. Median was $133,080. Source.

12. Unionen (Swedish white-collar union) market salaries 2024, systems developer, entry salary SEK 36,000-45,000/month. Source. Total cost ~SEK 750,000/year (~EUR 65,000): salary plus employer contributions (31.42%) plus overheads.

13. Anish Acharya, “Software’s YouTube moment is happening now”, Andreessen Horowitz, 2026. Source.

14. Retool, “The build vs. buy shift: how vibe coding and shadow IT have reshaped enterprise software”, February 2026. Survey of 817 respondents, Retool customers and builders, sample bias towards those who already build their own systems. Source.

15. Prompt Armor, “Claude Cowork Exfiltrates Files”, 14 January 2026. Source. See also: Simon Willison, simonwillison.net/2026/Jan/14/claude-cowork-exfiltrates-files/.

16. Anthropic, “Use Cowork safely”, Claude Help Center (2026). Verbatim: “Avoid granting access to local files with sensitive information, like financial documents.” Source.

17. Anthropic, “Use Cowork safely”, Claude Help Center (2026). Verbatim: “Cowork activity is not captured in audit logs, Compliance API, or data exports. Do not use Cowork for regulated workloads.” Source. See also: Using Agents According to Our Usage Policy.

18. Anthropic, Privacy Policy (effective 12 January 2026), section 2: consumer accounts (Free/Pro) are used for model training by default. Users can turn this off in account settings. Business accounts (API, Team, Enterprise) are never trained on customer data per Commercial Terms section B. Source.

19. Anthropic, Data Processing Addendum (effective 24 February 2025). SOC 2 Type II, ISO/IEC 27001, ISO/IEC 27017, ISO/IEC 27018, CSA STAR. Annual independent security audit (DPA Schedule 2, section D.2). Annual penetration test by external assessors (DPA Schedule 2, section G.2). Audit rights for customers with DPA (DPA section F). Source. Certifications listed at claude.com/regional-compliance.

20. Regulation (EU) 2016/679 of the European Parliament and of the Council (GDPR), Article 28: processing by a processor shall be governed by a contract or other legal act. Without such an agreement, the legal basis for a third party to process personal data on behalf of the controller is missing.

21. ISO/IEC 42001:2023, Annex A (normative), controls A.2.2 (AI policy), A.3.2 (roles and responsibilities), A.5.2 (impact assessment), A.8.2 (information to users), A.9.2 (responsible use), A.9.4 (intended use), and A.10.3 (suppliers).

22. Regulation (EU) 2024/1689 of the European Parliament and of the Council on artificial intelligence (EU AI Act). Articles cited: 4 (AI literacy, in effect since 2 Feb 2025), 25(1)(c) (changing intended use makes you a provider), 26 (deployer obligations for high-risk: human oversight, inform employees, DPIA), 50(4) (transparency for AI-generated text), 99(4) and 99(6) (fines up to EUR 15M / 3% of turnover, lower for SMEs). Annex III lists high-risk areas: employment, credit scoring, public services. Articles 25, 26, 50 apply from 2 Aug 2026. Full text, OJ L 2024/1689.