In February 2026, software companies fell up to 30 percent on Nasdaq.[1] Wall Street called it the “SaaSpocalypse.” Companies like Salesforce, Adobe, Intuit, and ServiceNow dropped 25-30 percent in a matter of weeks.[2] The market decided that AI agents will soon do the work that currently requires human users, and that this means fewer licenses.

Maybe it’s right. But Yale Budget Lab’s research on AI and the labor market shows that after three years of intense AI hype, researchers have been unable to measure any significant impact on employment.[3] Occupations with high AI exposure don’t have higher unemployment.

At the same time, we hear IT managers describe building agents in Microsoft Copilot that compile audit preparation materials and handle similar tasks.[4] And in software development, tools with agents like

So what is it? Hype or reality? Probably both.

Where things actually stand

We reviewed 14 vendors[6] of management system and

The activity is there. But an agent generating a draft is one thing. An agent taking responsibility for an entire process (registering a deviation, assessing consequences, linking to the right process, initiating a corrective action, and following up) is something else entirely.

And it’s not just a technical challenge. There are good reasons to be careful.

Security risks. Agents with system access can exfiltrate data, follow injected instructions in documents they read (prompt injection), and do essentially anything on your machine.

Reports of agents leaking information or being manipulated by malicious input grow week by week. In a management system, where records drive how the organization operates and stored data is often sensitive, the stakes are high.

The productivity gain might not be what it looks like. The agent does the job in 42 seconds instead of a full day. But was the result correct? Does the review take as long as doing it manually? We’ve written about this question before based on Yale research: after three years of AI hype, researchers haven’t seen measurable impact on the labor market. That should give us all pause.

What we built

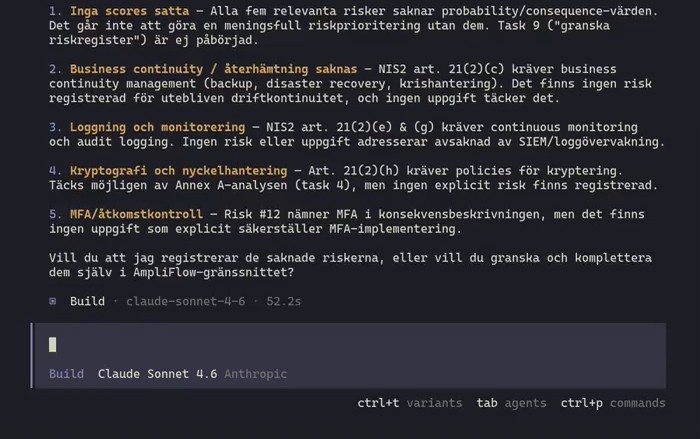

Talk about agents is cheap. Here’s 42 seconds of video showing an agent reading 9 tasks and 5 existing risks, comparing against NIS2 requirements, finding 5 gaps, and registering them directly in AmpliFlow:

It’s af-cli

It’s a fully functional Labs product. The binary is on GitHub and you can download and run it. But we don’t recommend it as anything other than research. It exists because we want to understand what’s possible, not because we think everyone should replace their workflows tomorrow.

And if you want to register deviations by clicking in AmpliFlow, you do that. Same data, same system.
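The gap-finding step in the demo can be pictured as a plain set comparison: collect what the existing risks already cover, then list the requirements left uncovered. The sketch below is only an illustration of that idea, not how af-cli works internally. The `Requirement` and `Risk` types, the `find_gaps` function, the `REQ-…` identifiers, and the sample topics are all hypothetical placeholders, not text from NIS2 or data from AmpliFlow.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirement:
    ref: str    # hypothetical requirement identifier
    topic: str  # topic the requirement is about


@dataclass(frozen=True)
class Risk:
    title: str
    topics: frozenset  # topics this existing risk already addresses


def find_gaps(requirements, risks):
    """Return the requirements whose topic no existing risk covers."""
    covered = set()
    for risk in risks:
        covered |= risk.topics
    return [req for req in requirements if req.topic not in covered]


# Hypothetical sample data, invented for illustration only.
requirements = [
    Requirement("REQ-1", "incident handling"),
    Requirement("REQ-2", "supply chain security"),
    Requirement("REQ-3", "backup management"),
]
risks = [
    Risk("Ransomware outage", frozenset({"incident handling", "backup management"})),
]

gaps = find_gaps(requirements, risks)
for gap in gaps:
    print(f"Gap: {gap.ref} ({gap.topic})")
```

In practice the hard part is the matching itself: deciding whether a free-text risk really addresses a requirement is a judgment call, which is why the result still needs human review before anything is registered.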

What we don’t know

It might turn out that agents in management systems are as transformative as the internet was for office work. It might turn out to be the emperor’s new clothes.

We have a working product and a demo showing what’s technically possible. We also have Yale research showing that three years of hype haven’t produced measurable impact, and a growing list of security incidents where agents running unchecked are the culprit.

If you already let employees use AI tools (ChatGPT, Copilot, Claude), you have a governance need that’s growing.

We’re testing, carefully, and sharing what we find. Here’s what it looks like right now.

Footnotes

1. a16z, “Good news: AI Will Eat Application Software,” March 2026: “Since the start of 2026, ETFs for public software companies have fallen by 30 percent, erasing all the gains since the launch of ChatGPT.” https://a16z.com/good-news-ai-will-eat-application-software/
2. a16z, same source: “Companies like Salesforce, Adobe, Intuit, ServiceNow, and Veeva…are down 25 to 30 percent in a matter of weeks.”
3. Yale Budget Lab, “Evaluating the Impact of AI on the Labor Market,” 2025: “The broader labor market has not experienced a discernible disruption since ChatGPT’s launch.” Goldman Sachs reference on AI investment from the same report. https://budgetlab.yale.edu/research/evaluating-impact-ai-labor-market-current-state-affairs
4. Microsoft, Ignite 2024, November 2024: “Nearly 70% of the Fortune 500 now use Microsoft 365 Copilot.” https://blogs.microsoft.com/blog/2024/11/19/ignite-2024-why-nearly-70-of-the-fortune-500-now-use-microsoft-365-copilot/
5. a16z, “The Top 100 Gen AI Consumer Apps, 6th Edition,” March 2026. https://a16z.com/100-gen-ai-apps-6/
6. Own research, March 2026. Vendors reviewed: ServiceNow, SAP, MasterControl, Stratsys, Onspring, LogicGate, ETQ/Hexagon, Qualio, Greenlight Guru, Diligent, Archer, MetricStream, Clarendo, and Centuri.
7. ServiceNow, “AI Agents.” Offers “AI Agent Orchestrator,” “Agent Studio,” and “Autonomous Workforce” for IT/security operations. https://www.servicenow.com/products/ai-agents.html
8. SAP, “AI Agents.” “Joule Agents” for finance, procurement, supply chain, HR. https://www.sap.com/products/artificial-intelligence/ai-agents.html
9. MasterControl, “AI for Life Sciences, Quality & Manufacturing.” Describes “Secure agentic AI platform” but every tool requires human initiation and approval. https://www.mastercontrol.com/ai-for-life-sciences-quality-manufacturing/
10. Stratsys, “Stratsys AI.” Mentions “AI agents” but capabilities are summarization, suggestions, and analysis. https://www.stratsys.com/sv/stratsys-ai
11. Regulation (EU) 2024/1689 (AI Act). Article 50 requires transparency for AI-generated content.
12. a16z, same source: “In January 2026, an open-source project called OpenClaw went from a solo developer’s side project to 68,000 GitHub stars…became the most-starred project on Github…acquired by OpenAI in February 2026.”