Your employees are already using AI. Do you control the tools, the data, and the ownership?

ChatGPT, Copilot, and built-in AI features already show up in daily work across small and mid-sized companies. The questions arrive immediately: which tools are approved, what data may be used, who owns the risks, and what happens when something goes wrong? ISO 42001 gives you the structure. AmpliFlow turns it into a digital AI management system that becomes part of daily work.

AmpliFlow participated in ISO's working group for ISO/IEC 42001.

What AI governance looks like in practice for an SMB

The point is not to write an AI policy and hope for the best. The point is to create a working method that makes AI use safer, traceable, and easy to follow up in daily work.

Approved tools and clear rules

Collect which AI tools may be used, for what purpose, and with what data. Staff stop guessing, and managers stop chasing answers afterward.
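At its simplest, such a register maps each tool to the purposes and data classes it is approved for. The Python sketch below assumes a small in-memory register. The tool entries, purposes, data classes, and owners are hypothetical examples, not AmpliFlow's data model.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ApprovedTool:
    """One entry in a hypothetical register of approved AI tools."""
    name: str
    purposes: frozenset[str]       # what the tool may be used for
    allowed_data: frozenset[str]   # which data classes it may receive
    owner: str                     # who is accountable for the tool


# Hypothetical example entries; replace with your organization's own decisions.
REGISTER = {
    "ChatGPT": ApprovedTool(
        name="ChatGPT",
        purposes=frozenset({"drafting", "summarizing"}),
        allowed_data=frozenset({"public", "internal"}),
        owner="IT manager",
    ),
    "Copilot": ApprovedTool(
        name="Copilot",
        purposes=frozenset({"drafting", "summarizing", "coding"}),
        allowed_data=frozenset({"public", "internal", "customer"}),
        owner="Head of operations",
    ),
}


def is_allowed(tool: str, purpose: str, data_class: str) -> bool:
    """Return True only if the tool is approved for both this purpose and this data class."""
    entry = REGISTER.get(tool)
    if entry is None:
        return False  # tools missing from the register are unapproved by default
    return purpose in entry.purposes and data_class in entry.allowed_data


print(is_allowed("ChatGPT", "drafting", "customer"))  # False: customer data not allowed
```

Unknown tools return False, so anything not on the list counts as unapproved by default. That default is what lets staff stop guessing.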

Ownership, risks, and incidents in the same flow

Link each AI system to owners, risks, impact assessments, and actions. When something goes wrong, you already have a traceable way to report, investigate, and follow up.

Clear answers for customers, auditors, and management

When someone asks how you govern AI, you can show more than good intentions. You can show policy, training, risk work, decisions, and follow-up in one place.

Start with the platform, without building everything yourselves

Mini fits teams that want to drive more of the AI management system themselves but do not want to start from an empty system. We set up the environment, base templates, control structure, and AI support so you can move quickly from unclear AI use to a working method people actually follow.

Where do you stand against ISO 42001 today?

Get a clear picture of your AI governance maturity with a free, no-obligation gap analysis. Answer 12 questions based on the ISO 42001 clauses (context, leadership, planning, support, operation, performance evaluation, improvement) and get an assessment of your maturity, plus concrete recommendations on what needs strengthening.

AI becomes a management problem long before it becomes a certification project

For SMB teams, ISO 42001 starts with getting daily work under control, not with adding another policy binder.

No one knows which tools are actually in use

AI use often starts in small ways. Someone summarizes meetings, someone drafts customer emails, someone tests code or proposals. Without a shared structure it becomes unclear which tools are approved, what data may be used, and who owns the decisions.

Customer and procurement questions get hard to answer

When customers, parent companies, or larger buyers ask how you govern AI, it is not enough to say you are careful. They want to see policy, ownership, risk assessment, training, and follow-up that hang together.

Small mistakes become real business problems

Wrong AI output, customer data in the wrong tool, or unclear ownership are not technical footnotes. They become support issues, contract risk, GDPR questions, and lost trust. ISO 42001 helps you build a working method before that gets expensive.

What you say vs what the auditor looks for

There's a difference between having an AI management system and living it. Here's what the auditor actually checks:

From a control problem to working AI governance

AmpliFlow brings AI policy, approved tools, risk assessments, impact assessments, actions, and follow-up into one system. When you later want to work toward ISO 42001, the Annex A controls, SoA support, and task flow are already there.

AI-driven risk analysis

AmpliFlow's AI generates risk scenarios and consequence analyses automatically. Combine it with ORA to assess AI risks according to ISO 42001 (6.1.2).

Impact assessment for AI systems

Document how your AI systems affect individuals and society, a requirement specific to ISO 42001 (6.1.4, A.5).

Statement of Applicability (SoA)

The SoA is generated automatically based on your applicability decisions per control. Justify included and excluded controls directly in the control register (6.1.3e).
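One way to picture this: the SoA is a view over the control register, where every control carries a decision and a justification. In the sketch below, the `Control` type and `build_soa` function are hypothetical, and the control IDs and titles in the example are illustrative only; check them against the standard itself.

```python
from dataclasses import dataclass


@dataclass
class Control:
    """A hypothetical control-register entry with its applicability decision."""
    control_id: str
    title: str
    applicable: bool
    justification: str


def build_soa(controls: list[Control]) -> list[str]:
    """Derive Statement of Applicability lines from per-control decisions.

    Every control, included or excluded, must carry a justification;
    a blank one raises an error instead of producing an incomplete SoA.
    """
    lines = []
    for control in controls:  # keeps the register's own order
        if not control.justification.strip():
            raise ValueError(f"{control.control_id}: justification missing")
        status = "Included" if control.applicable else "Excluded"
        lines.append(f"{control.control_id} {control.title}: {status} ({control.justification})")
    return lines


# Illustrative entries only.
register = [
    Control("A.2.2", "AI policy", True, "All approved AI use is governed by policy"),
    Control("A.6.2.6", "AI system operation and monitoring", True, "Copilot is used across the business"),
]
for line in build_soa(register):
    print(line)
```

Failing on a missing justification, rather than skipping the control, mirrors the requirement to justify both inclusions and exclusions.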

Legislation monitoring for EU AI Act

Add the EU AI Act to the legislation registry and set target dates for when your adaptations must be complete.

Process maps for AI-affected processes

Document which processes use AI and how. Link AI tools to process steps.

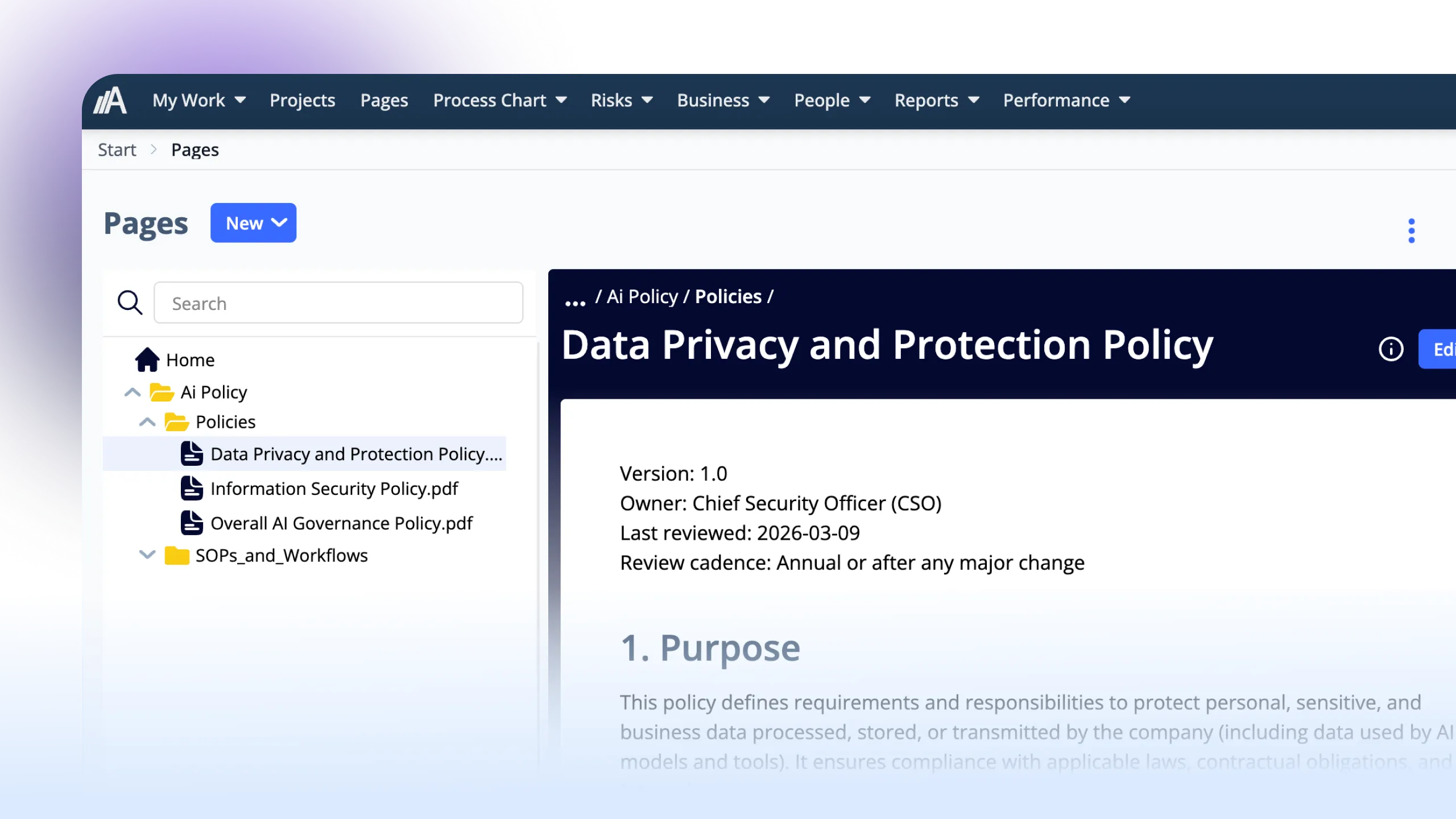

AI policies via Pages

Create AI policies in the built-in text editor (wiki). Organize in folders and share with employees.

Stakeholder analysis with ISO 42001 linkage

Map stakeholders affected by your AI usage and their requirements.

Action management for AI initiatives

AmpliFlow's AI suggests actions and improvements. Follow up with owners and deadlines.

Responsible AI in practice

ISO 42001 is built on principles for responsible AI. AmpliFlow helps you translate principles into concrete actions.

Transparency

Explain how AI systems work and make decisions

Fairness

Avoid bias and ensure equal treatment

Accountability

Clear ownership and responsibility for AI decisions

Safety

Protect against misuse and unintended harm

Prepare for the EU AI Regulation

The EU AI Act is the world's first comprehensive AI legislation. ISO 42001 helps you meet the requirements.

Risk classification

Classify your AI systems according to EU risk levels: unacceptable, high, limited or minimal risk.
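The four tiers are checked from most to least severe. The sketch below is deliberately simplified and assumes the answers to three yes/no questions are already known; the parameter names are hypothetical. A real assessment works through the Act's list of prohibited practices, its high-risk categories (such as those in Annex III), and its transparency obligations, and needs legal review.

```python
from enum import Enum


class RiskLevel(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


def classify(is_prohibited_practice: bool,
             in_high_risk_area: bool,
             interacts_with_people_or_generates_content: bool) -> RiskLevel:
    """Map three simplified yes/no answers to an EU AI Act risk tier.

    The checks run from the most to the least severe tier, so the
    first matching condition wins.
    """
    if is_prohibited_practice:
        return RiskLevel.UNACCEPTABLE
    if in_high_risk_area:
        return RiskLevel.HIGH
    if interacts_with_people_or_generates_content:
        return RiskLevel.LIMITED  # mainly transparency obligations
    return RiskLevel.MINIMAL


# A customer-support chatbot outside any high-risk area:
print(classify(False, False, True).value)  # limited
```

The ordering matters: a system that is both in a high-risk area and customer-facing lands in the high tier, not the limited one.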

Documentation requirements

Meet requirements for technical documentation, user instructions and quality management.

Compliance evidence

Show supervisory authorities that you're in control. ISO 42001 certification provides credible evidence.

Timeline

The EU AI Act entered into force in August 2024 and applies in stages: bans on prohibited AI practices since February 2025, requirements for general-purpose AI (GPAI) models from August 2025, and most high-risk requirements from August 2026. Fines for the most serious violations reach 35 million euros or 7 percent of global annual turnover, whichever is higher. Note: ISO 42001 is not a harmonized standard under the AI Act. It does not provide a presumption of conformity, but it offers a proven structure that supports compliance.

Classify your AI system

Answer three questions about your AI system and see which risk level it falls under according to the EU AI Act.


From AI system to safe use

ISO 42001 requires risk-based thinking for AI. AmpliFlow makes it concrete.

What changes in daily work?

ISO 42001 is not about writing documents nobody reads. It's about concrete changes in how you work with AI:

From "everyone does what they want" to approved tools

A clear list of which AI tools may be used, with what data, and who is responsible. Employees know what applies without having to ask.

AI training from day one

New employees get an introduction to the organization's AI policy and tools. Not a PowerPoint deck, but a practical walkthrough of what's allowed and why.

AI incidents are reported and investigated

When something goes wrong with an AI system there is a process: report, investigate, act, learn. Just like quality deviations, but adapted for AI-specific risks.
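The report, investigate, act, learn sequence can be modelled as a small state machine that only moves forward one step at a time. This is a sketch: the stages come from the text, while the `AIIncident` class and its methods are hypothetical.

```python
from enum import Enum


class Stage(Enum):
    REPORTED = 1
    INVESTIGATED = 2
    ACTED = 3
    LEARNED = 4


class AIIncident:
    """Hypothetical incident record that moves through the stages in order."""

    def __init__(self, description: str, ai_system: str) -> None:
        self.description = description
        self.ai_system = ai_system
        self.stage = Stage.REPORTED
        self.log: list[str] = [f"{Stage.REPORTED.name}: {description}"]

    def advance(self, note: str) -> Stage:
        """Move to the next stage and record a note; no skipping, no reopening."""
        if self.stage is Stage.LEARNED:
            raise ValueError("incident already closed")
        self.stage = Stage(self.stage.value + 1)
        self.log.append(f"{self.stage.name}: {note}")
        return self.stage


incident = AIIncident("Customer data pasted into an unapproved tool", "ChatGPT")
incident.advance("Root cause: guideline unclear about customer data")
incident.advance("Guideline updated and tool access reviewed")
incident.advance("Case added to onboarding training")
print(incident.stage.name)  # LEARNED
```

Because `advance` only steps forward, an incident cannot be closed without passing through investigation and action, and the log keeps the trail for later follow-up.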

Regular follow-up

Quarterly review of AI usage, incidents and risks. Management makes decisions based on facts, not assumptions.

From start to AI governance

A realistic timeline for ISO 42001 implementation. Duration varies based on organization size and AI maturity.

AI inventory

1-2 weeks: Map all AI systems and use cases in the organization

Gap analysis

1-2 weeks: Compare current governance against ISO 42001 requirements

Risk assessment

2-3 weeks: Assess risks for each AI system according to EU AI Act risk levels

Impact assessment

1-2 weeks: Assess how your AI systems affect individuals and society (6.1.4), and document the results according to A.5.

Policy & processes

4-8 weeks: Develop AI policy, guidelines and governance documents

Statement of Applicability (SoA)

1-2 weeks: Document which Annex A controls you apply and justify inclusions and exclusions (6.1.3e)

Implementation

4-12 weeks: Deploy controls, train staff, and start applying the new ways of working

Internal audit

1-2 weeks: Review AIMS effectiveness and address findings
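Adding up the phase estimates gives a rough lower and upper bound. The sketch below assumes the phases run strictly one after another; in practice some overlap, which shortens the total.

```python
# Phase durations in weeks as (min, max), taken from the timeline above.
PHASES = {
    "AI inventory": (1, 2),
    "Gap analysis": (1, 2),
    "Risk assessment": (2, 3),
    "Impact assessment": (1, 2),
    "Policy & processes": (4, 8),
    "Statement of Applicability (SoA)": (1, 2),
    "Implementation": (4, 12),
    "Internal audit": (1, 2),
}


def total_weeks(phases: dict[str, tuple[int, int]]) -> tuple[int, int]:
    """Sum the min and max durations, assuming no phases overlap."""
    low = sum(lo for lo, _ in phases.values())
    high = sum(hi for _, hi in phases.values())
    return low, high


print(total_weeks(PHASES))  # (15, 33)
```

That is roughly 15 to 33 weeks end to end, with implementation accounting for the widest spread.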

Why ISO 42001?

Because AI is already part of the business.

Stop improvising AI governance

Get a clear way to govern tools, data, ownership, and follow-up before AI use spreads further.

Answers that hold in customer and audit conversations

When customers or auditors ask how you govern AI, you can show the process instead of talking in general terms.

Reduced risk

Structured AI governance reduces the risk of incidents, data leaks, and bad decisions without stopping the tools that help.

A stronger base for future requirements

ISO 42001 gives you a practical structure for EU AI Act questions, customer demands, and future certification if you want to take that step later.

Read more about ISO 42001

What is ISO/IEC 42001?

A deep dive into the standard for AI management systems: what it requires, how it relates to other standards, and why it exists.

AmpliFlow in the ISO 42001 working group

How AmpliFlow contributes to the development of the standard and what it means for our customers.

You do not need to build AI to need governance

ISO 42001 becomes relevant as soon as AI affects how you work, communicate, or make decisions. For most SMBs, that starts with use, not development.

If you build your own AI, the demands often grow. But the more common starting point is simpler: get control of Copilot, ChatGPT, and other AI features already used across the business.

Questions about ISO 42001

Answers without AI jargon.

What is an AI Management System (AIMS)?

AIMS is a management system specifically for AI. It defines how the organization governs, monitors, and improves its use of AI, in the same way an ISMS does for information security or a QMS does for quality.

Do we need ISO 42001 if we don't develop our own AI?

Yes, if you use AI tools like ChatGPT, Copilot, or AI-based services. ISO 42001 covers responsible use of AI, not just development. Any organization whose employees use AI tools can benefit from the standard.

How does ISO 42001 relate to the EU AI Act?

The EU AI Act is legislation with requirements and sanctions. ISO 42001 is a voluntary standard that helps you meet legal requirements. Implementing ISO 42001 supports your compliance work, but ISO 42001 is not a harmonized standard under the AI Act and does not provide automatic presumption of conformity.

Can we use the same tools as for ISO 27001?

Yes. AmpliFlow has built-in controls for both ISO 27001 and ISO 42001 with AI assistance, automatic SoA, and task management per control. Beyond the control registers, both standards share the same tools: risk analysis, documentation via Pages, action management, and checklists.

How long does implementation take?

It depends on how many AI systems you have, how mature your current governance is and whether you already have other ISO certifications. The time goes toward embedding new ways of working, not writing documents.

Do we need to get certified?

No, certification is voluntary. You can implement ISO 42001 without external audit. But certification provides credible evidence for customers, partners and supervisory authorities.

Can AI models deliberately avoid controls?

Yes. Anthropic's research (December 2024) showed that AI models can exhibit so-called "alignment faking": they behave differently depending on whether they believe they are being observed and trained. In the experiment, the model showed explicit alignment-faking reasoning in about 12 percent of the cases where it believed its responses would be used for training. This is one reason ongoing monitoring, which ISO 42001 requires, matters more than initial testing alone.

What is prompt injection and why does it matter?

Prompt injection means an attacker manipulates an AI system's instructions through hidden text in documents or web pages. A public demonstration against Microsoft 365 Copilot showed how an attacker could extract sensitive data by embedding hidden instructions in a shared document. ISO 42001 does not prescribe specific technical controls. Instead, it addresses this through risk assessment (6.1.2) that identifies prompt injection as a risk, risk treatment (6.1.3) that selects appropriate controls from Annex A, and requirements for operation and monitoring (A.6.2.6) of AI systems. The standard takes a risk-based approach rather than requiring specific technical solutions.

What do we do about shadow AI?

Shadow AI (when employees use AI tools without the organization's knowledge) is one of the most common risks. Step one: map actual AI usage (not just approved usage). Step two: create clear guidelines for which tools may be used and with what data. Step three: make it easier to use approved tools than to go around the system. ISO 42001 formalizes this through requirements for AI policy and awareness raising. We have written more about the phenomenon in the article <a href='/articles/shadow-ai-employees-building-without-it/'>Shadow AI: managers build tools IT doesn't know about</a>.
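Step one, mapping actual usage against what is approved, comes down to a simple comparison. The function below is a hypothetical sketch and assumes usage data has already been collected as (user, tool) pairs, for example from a staff survey. "SummarizerX" is a made-up tool name.

```python
from collections import Counter


def shadow_ai_report(usage: list[tuple[str, str]], approved: set[str]) -> list[tuple[str, int]]:
    """Count distinct users per unapproved tool, most widely used first.

    Repeated (user, tool) pairs are collapsed, so each person counts once per tool.
    Ties are broken alphabetically by tool name.
    """
    distinct = set(usage)
    counts = Counter(tool for _, tool in distinct if tool not in approved)
    return sorted(counts.items(), key=lambda item: (-item[1], item[0]))


usage = [
    ("anna", "ChatGPT"), ("anna", "ChatGPT"),
    ("bo", "SummarizerX"), ("cai", "SummarizerX"),
    ("cai", "Copilot"),
]
print(shadow_ai_report(usage, approved={"Copilot"}))  # [('SummarizerX', 2), ('ChatGPT', 1)]
```

Ranking by number of users points to where step two (clear guidelines) and step three (a better approved alternative) will have the most effect.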

Ready for responsible AI?

Book a demo and we'll show you how AmpliFlow can help with AI governance. No sales pitch, just practical answers.