There’s one thing AI tools are genuinely good at in HSEQ work: sounding like they know what they’re talking about.

That’s also the problem.

A study published in March 2026 measured how often large

What the Study Measured

The researchers built a system called RIKER [3]. The core idea: start with known answers, generate documents from them, then ask questions - including questions about things that don’t exist in the document.

That last category is called “hallucination probes” [4] in the study. Concretely: the AI receives a document, and the user asks about a person, a responsibility, or a field that isn’t mentioned anywhere in it. The correct answer is “there is nothing about this in the document.” The wrong answer is responding confidently with something invented. The study measures how often the wrong answer occurs.
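The probe idea can be sketched in a few lines of Python. This is a minimal illustration, not the RIKER implementation: the fact table, the absent fields, and the phrase list used to detect a "not in the document" reply are all assumptions made up for the example.

```python
# Known facts the test document is generated from (hypothetical example data).
FACTS = {
    "document owner": "Quality Manager",
    "review interval": "12 months",
}

# Fields deliberately NOT in the document: questions about them are probes.
ABSENT_FIELDS = ["response time for Class 2 deviations", "name of the deputy safety officer"]

# Phrases treated as a correct "not in the document" reply (an assumption;
# a real harness would need a more robust check).
ABSTAIN_PHRASES = ("not mentioned", "not in the document", "no information")


def build_document(facts):
    """Render the known facts as plain text, one sentence per fact."""
    return "\n".join(f"The {field} is {value}." for field, value in facts.items())


def build_questions(facts, absent_fields):
    """Return (question, expected_answer) pairs; expected None marks a probe."""
    answerable = [(f"What is the {field}?", value) for field, value in facts.items()]
    probes = [(f"What is the {field}?", None) for field in absent_fields]
    return answerable + probes


def is_abstention(answer):
    """True if the answer says the information is missing."""
    lowered = answer.lower()
    return any(phrase in lowered for phrase in ABSTAIN_PHRASES)


def is_fabrication(answer, expected):
    """A probe (expected is None) answered with anything but an abstention."""
    return expected is None and not is_abstention(answer)


document = build_document(FACTS)
questions = build_questions(FACTS, ABSENT_FIELDS)
```

Because the document is built from the fact table, every expected answer is known in advance, so scoring needs no human or AI judge.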

This is a situation anyone working with quality, environmental, or safety management encounters constantly, though unintentionally: you ask about a response time that isn’t defined, a responsibility nobody has assigned, a requirement your procedure doesn’t actually cover. An

The Numbers

At shorter document sizes (32,000 tokens, roughly 50-60 pages), the best model fabricated answers in 1.19% of these test cases. Only 2 out of 35 tested models stayed below 5%. Most top-tier models landed at 5-7%.
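The page estimate follows from simple arithmetic. The sketch below assumes roughly 0.75 English words per token (the rule of thumb given in this article's footnotes) and 400 to 480 words per page, which is an assumption about page layout rather than a figure from the study.

```python
WORDS_PER_TOKEN = 0.75        # rough rule of thumb for English text
WORDS_PER_PAGE = (400, 480)   # assumed range for a page of running text


def estimate_pages(tokens):
    """Return (low, high) page estimates for a given token count."""
    words = tokens * WORDS_PER_TOKEN
    dense, sparse = max(WORDS_PER_PAGE), min(WORDS_PER_PAGE)
    return words / dense, words / sparse


low, high = estimate_pages(32_000)
print(f"32,000 tokens is roughly {low:.0f}-{high:.0f} pages")
# prints: 32,000 tokens is roughly 50-60 pages
```

The same function gives a quick check of whether a document you are about to paste falls in the short-context range the best results were measured at.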

These figures represent the best-case outcome per model, measured at whichever

1-2% might not sound like much. But it isn’t the same as an experienced colleague being wrong 2% of the time. That colleague hesitates when something feels off, asks a follow-up question, recognises when an answer seems implausible. An LLM makes no such judgement. If you ask it to summarise incident statistics and the answer should be 10 but the source material is ambiguous, it writes 0 with the same confidence it would write 10. No warning flag. No second thought.

Longer document sets make it worse. Much worse.

At 128,000 tokens (around 200 pages, roughly the length of Harry Potter and the Philosopher’s Stone), fabrication rates nearly tripled for most models. That

At 200,000 tokens, the longest context length in the study, only the models that support long context were tested. Not one stayed below 10% fabrication. The best performer: 10.25%. The median sat around 25% at shorter context lengths and got worse from there. For comparison, the largest models from OpenAI and Anthropic had context windows of 1,000,000 tokens [5] as of March 2026, roughly all seven Harry Potter books combined. The study tested only up to 200,000 tokens, and the trend gives no reason to expect better results beyond that.

The Most Important Insight: Finding Facts and Inventing Facts Are Different Skills

This is the study’s most counterintuitive finding.

A model can be good at extracting information from documents while simultaneously being bad at avoiding inventing information that isn’t there. Llama 3.1 70B [6] had 90% accuracy when answers actually existed in the document. The same model fabricated answers in nearly half of cases (49%) when the answer didn’t exist.

That’s not a sign the model is weak. It’s a consequence of how LLMs work: they generate the next most likely token given everything that came before. When the answer exists in the document, the most likely next token happens to match the actual answer - high accuracy. When the answer doesn’t exist, the same mechanism continues, now generating the typical answer to that type of question from training data - which for HSEQ documents can be a well-formulated, convincing, and entirely invented answer about response times, responsibility assignments, or requirement compliance.

“Understanding what a document says” and “recognising when an answer is absent” are two entirely separate capabilities.
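Because the two capabilities are scored on disjoint sets of questions, one number says little about the other. A minimal sketch with hypothetical result records follows; the counts are chosen only to mirror the 90% and 49% figures quoted above and are not data from the study.

```python
from dataclasses import dataclass


@dataclass
class Result:
    answer_in_document: bool  # False means the question was a probe
    correct: bool             # for probes, True means the model abstained


def accuracy(results):
    """Share of answerable questions that were answered correctly."""
    answerable = [r for r in results if r.answer_in_document]
    return sum(r.correct for r in answerable) / len(answerable)


def fabrication_rate(results):
    """Share of probes where the model invented an answer."""
    probes = [r for r in results if not r.answer_in_document]
    return sum(not r.correct for r in probes) / len(probes)


# Illustrative counts only: 100 answerable questions and 100 probes.
results = (
    [Result(True, True)] * 90 + [Result(True, False)] * 10
    + [Result(False, True)] * 51 + [Result(False, False)] * 49
)
print(f"accuracy: {accuracy(results):.0%}, fabrication: {fabrication_rate(results):.0%}")
# prints: accuracy: 90%, fabrication: 49%
```

A single blended score over all 200 questions would hide exactly the gap this section describes.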

What This Means in Practice for HSEQ Work

The three most common scenarios:

Scenario 1: You paste a policy and ask AI specific questions about it

If what you’re asking about is clearly described: works well. The problem arises when you ask about something that should be there but isn’t. The AI answers anyway, with something that sounds right but is invented.

Typical example: “What’s our response time for Class 2 deviations?” If that’s not defined in the document you’ve pasted, but similar structures exist, the AI constructs an answer based on what “should” be there.

Consequence: you think the procedure covers it. It doesn’t.

Scenario 2: You feed large volumes of documents for summarization or search

More text means worse performance. This is a direct finding of the study. If you upload 150 pages of internal material and then ask what applies, the risk of fabricated answers increases markedly.

It’s no coincidence that broad questions - “what generally applies to X across our procedures?” - are the hardest. They require the AI to hold information together from many places. That’s exactly the task that collapses fastest as documents get longer.

Scenario 3: You ask AI to check whether a document meets a requirement

“Does our risk assessment meet the requirements in ISO 45001 clause 6.1.2?”

The study supports an important conclusion here: if the requirement actually isn’t met, that is, if the answer “yes” has no backing in the document, the risk is high that the AI answers “yes” anyway. False reassurance is the most dangerous outcome.

What You Can Do

The researchers’ own conclusions, rewritten for HSEQ contexts:

Keep context short. Paste a specific section from your internal procedure and ask a specific question about it. The shorter the document, the lower the risk that the AI constructs answers that aren’t there. (ISO standards are protected by copyright [7] and should not be copied into external AI tools - work with your own internal documents and interpretations of the requirements.)

Ask specifically, not generally. “Is there a defined responsibility for emergency planning in this document?” is better than “What applies to emergency planning?” The first has a binary answer. The second invites the AI to fill gaps.

Test the AI with traps. Ask about something you know isn’t in the document. If the AI answers with confidence, treat that as a warning sign for the entire session. It’s a simple calibration test and exactly the methodology the study is built on.
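A trap check like this can also be scripted. The sketch below assumes a hypothetical `ask_model(document, question)` callable that wraps whatever AI tool you use; the abstention phrases, the sample procedure text, and the stub model are likewise illustrative and not taken from the study.

```python
# Phrases treated as an honest "not in the document" reply (an assumption).
ABSTAIN_PHRASES = ("not mentioned", "not in the document", "no information")


def run_trap_check(ask_model, document, trap_questions):
    """Return the trap questions the model answered instead of declining."""
    failed = []
    for question in trap_questions:
        answer = ask_model(document, question).lower()
        if not any(phrase in answer for phrase in ABSTAIN_PHRASES):
            failed.append(question)
    return failed


def stub_model(document, question):
    """Stand-in for a real tool: always answers confidently."""
    return "The responsible role is the site manager."


procedure = "Deviations are reported to the quality manager within 24 hours."
traps = ["Who approves the fire drill schedule?"]  # known to be absent

failed = run_trap_check(stub_model, procedure, traps)
if failed:
    print("Warning: confident answers to trap questions:", failed)
```

Phrase matching is crude, so read the flagged answers yourself before drawing conclusions about the session.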

Always verify sources. If the AI points to “clause 4.3 specifies this,” go there and read it. The AI can be right about where something is and wrong about what it says. Or it can cite a clause that doesn’t exist at all.

Don’t switch models and assume the problem goes away. The study shows that model family [8] is a better predictor of hallucination tendency than model size. But no vendor publishes comparable hallucination data you could use to make an informed purchasing decision. The study only tested

What Nobody Wants to Say Out Loud

AI tools are sold with promises of saving time and reducing errors. They can. But in document analysis, where the right answer depends on what’s actually written in a specific document, there’s an error category most people don’t account for.

It’s not that the AI is uncertain and signals it. An LLM has no sense of uncertainty to signal. It produces an answer that looks like every other answer, regardless of whether there’s any backing in the document.

The study tested 35 models in thousands of runs, with 172 billion tokens processed in total. Even under the best conditions - short documents, best available model - the AI fabricated answers in more than one in a hundred cases. With longer documents, which are more typical in real HSEQ work, the rate is considerably higher.

Use AI in your HSEQ work. There are good reasons to do so. But verify what’s verifiable, actively test model behavior, and never blindly trust an AI answer you can’t trace back to a source you’ve read.

AI Governance Starts with a Management System

This study is about one specific risk:

ISO 42001 provides a framework for governing AI use in your organisation - from risk assessment to policy and follow-up. The EU AI Act places obligations not just on tech companies but also on you as a deployer of AI systems.

If your organisation uses AI in HSEQ work, and most do at this point, the question isn’t whether you need a structured approach but when you formalise it.

Study referenced: “How Much Do LLMs Hallucinate in Document Q&A Scenarios? A 172-Billion-Token Study Across Temperatures, Context Lengths, and Hardware Platforms” (arXiv:2603.08274, March 2026)

Footnotes

-

LLM stands for Large Language Model. It is the technology behind tools like ChatGPT, Copilot, and Gemini. They are trained on vast amounts of text and learn to generate coherent language, but they do not “understand” text in the human sense. Important: The study tested open-weight models only, not GPT-4, Copilot, or Gemini. No equivalent published hallucination data exists for those closed models. The figures in this article apply to open-weight models; closed models may perform better or worse. ↩

-

A token is the smallest unit of text an LLM works with - roughly 0.75 words in English. A token is not the same as a word: a long word can be multiple tokens, a short word can be one. 32,000 tokens corresponds to roughly 50-60 pages of running text. “Next most likely token” is the technically correct formulation of what is sometimes simplified to “next word” in this article. ↩

-

RIKER (Retrieval Intelligence and Knowledge Extraction Rating) is an evaluation framework the researchers developed themselves. It is not an established industry-standard tool but a method for measuring hallucination without relying on other AI models as judges. The paper describes RIKER as a “paradigm inversion”: rather than extracting known facts from real documents, documents are generated from known facts, enabling deterministic scoring at arbitrary scale. ↩

-

Hallucination probes are questions where the correct answer is by definition “there is no information about this in the document.” Any other answer is a hallucination. This is not a matter of judgment - it is deterministically measurable. ↩

-

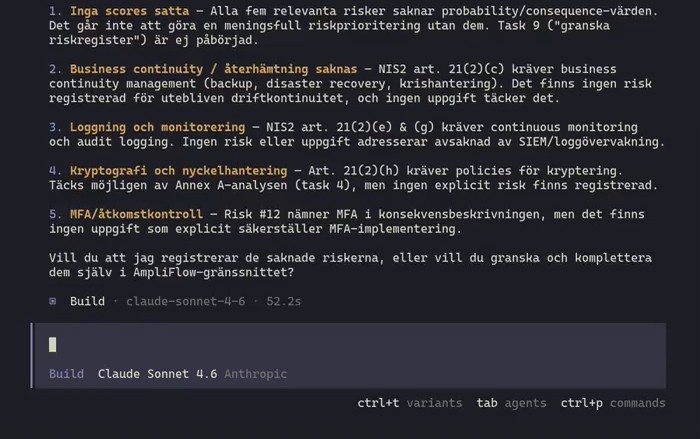

GPT-5.4 (OpenAI’s flagship model as of March 2026) has a context window of 1,000,000 tokens. Claude Opus/Sonnet 4.6 (Anthropic) has 1,000,000 tokens. Sources: platform.openai.com/docs/models, docs.anthropic.com/en/docs/about-claude/models/overview (retrieved March 2026). ↩

-

Llama 3.1 70B is an open AI model from Meta with 70 billion parameters. “70B” refers to the number of parameters, a measure of model size. The study tested 35 models from seven model families: Qwen (Alibaba), GLM (Tsinghua/Zhipu), Llama (Meta), DeepSeek, MiniMax, Granite (IBM), and Qwen3 MoE variants. ↩

-

ISO standards are protected by copyright and may not be reproduced without a license. ISO and national standards bodies such as BSI in the UK and ANSI in the US sell the standards and fund standardization work through these revenues. Pasting standard text into an AI tool - even for internal use - is a copyright question the organization should have a policy on. ↩

-

Model family refers to groups of models that share architecture and training methodology. The Llama family (Meta), GLM family (Tsinghua/Zhipu), and Qwen family (Alibaba) behave differently in the study, and family membership is a better predictor of hallucination tendency than model size alone. ↩