On 20 February 2026, the Swedish government published its AI strategy. The goal: Sweden should be among the top ten AI nations in the world.

That sounds ambitious. It is. But what does it mean for an ordinary company with 50 or 200 employees?

The short answer: more than most people think.

A Strategy for Society, Including Your Company

The strategy covers research, education, healthcare, defence, and business. Most of it targets government agencies and public bodies. But buried in the text are signals that apply to anyone using AI in their operations, and today that means most organizations.

Five things stand out.

1. AI Is Now Being Used as a Crime Tool. Against You.

The government states explicitly that AI is being used for organized crime, cyberattacks, and advanced financial fraud [1]. This is not an abstract threat to government systems. It is the phishing email your finance manager opened last month. It is the deepfake voice message impersonating your CEO. It is the automated attack on your supply chain.

NCSC (Sweden’s National Cybersecurity Centre) notes that criminal actors are refining their methods and that it “becomes increasingly difficult for users to detect forgeries and fraud” [2]. AI is what makes that refinement possible.

It is good that law enforcement is now getting an explicit mandate to understand and use AI. But that does not change the fact that you are already a target.

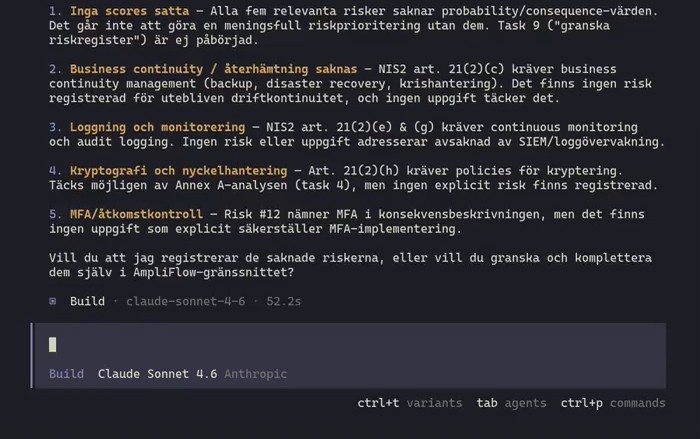

For your risk assessment, this adds a new category: AI-enhanced threats to your operations. ISO 42001 (the standard for AI management systems) covers AI risk assessment in clause 6.1.2, which is where this category belongs. These are not future risks to plan for. They are happening now.

2. Standards Are Taking the Role of Regulation

This is the most underappreciated section of the entire strategy.

The government writes that standards can fill an important function as an alternative or complement to regulation [1]. That is a clear political signal: when law has not caught up with technology, industry is expected to self-regulate through standards.

ISO 42001 is the standard for AI governance. It is certifiable and internationally recognized, and its framework aligns with EU AI Act requirements on risk management, documentation, and quality management.

Certification against ISO 42001 is not a legal requirement. But in an environment where authorities are actively encouraging standardization as a path to compliance, and where customers and public procurement are starting to ask about AI governance, it is a step toward being prepared.

3. Employers Are Responsible for AI Competence

The strategy is clear: employers need to strengthen AI competence among their employees [1].

This is not an invitation to optional webinars. It is a signal that employers are expected to take responsibility for how their staff uses AI: which tools, with what data, with what consequences.

This connects directly to ISO 42001 clause 7, which covers competence (7.2) and awareness (7.3). Who in your organization is responsible for ensuring employees understand what they are actually doing when they paste customer data into ChatGPT?

4. The Public Sector Is About to Become Your Most Demanding Customer

The government’s ambition is for Sweden to be the best in the world at using AI in public administration. A national AI workshop for public administration is planned, with full deployment by 2030 [1].

This is already showing up in procurement requirements. DIGG’s guidance on procuring AI tools explicitly highlights ISO certifications as a factor to investigate with suppliers and asks procurement officers to determine “what the certifications guarantee, and what significance they have in the choice of supplier” [3].

Public authorities, regions, and municipalities already know what to ask for. The question is whether you have an answer.

5. Disinformation Hits Companies, Not Just States

The strategy identifies AI-generated disinformation as a serious threat [1]. The text focuses on national resilience and defence. But the same technology is being used against companies: fabricated press releases, fake customer reviews, deepfake videos of your CEO saying things they never said.

Most risk assessments have no box for that. They should.

What Do You Do With This?

The strategy is a political document. It creates no direct obligations for private companies. But it points clearly in one direction: AI governance is on its way to becoming a baseline requirement, not a bonus.

The practical starting point is the same wherever you are:

- Inventory which AI systems you use, including those embedded in existing tools.

- Do a simple risk assessment for each. What can go wrong? What is the consequence?

- Appoint someone who owns the question. “IT handles it” is not enough.
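If a spreadsheet feels too loose, the three steps above can be sketched in a few lines of Python. This is a minimal illustration, not a format prescribed by ISO 42001 or the strategy: the class names, the 1 to 3 scoring scale, the threshold of 6, and the example entries are all assumptions made for the sketch.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Tuple


@dataclass
class Risk:
    description: str   # what can go wrong
    likelihood: int    # assumed scale: 1 (rare) to 3 (likely)
    consequence: int   # assumed scale: 1 (minor) to 3 (severe)

    @property
    def score(self) -> int:
        # Simple likelihood x consequence product, range 1..9
        return self.likelihood * self.consequence


@dataclass
class AISystem:
    name: str
    owner: Optional[str] = None        # None means nobody owns the question yet
    embedded_in: Optional[str] = None  # host tool, if the AI is part of another product
    risks: List[Risk] = field(default_factory=list)


def unowned(systems: List[AISystem]) -> List[str]:
    """Names of systems that have no named owner."""
    return [s.name for s in systems if not s.owner]


def top_risks(systems: List[AISystem], threshold: int = 6) -> List[Tuple[str, str, int]]:
    """(system, risk, score) for every risk at or above the threshold, highest first."""
    hits = [
        (s.name, r.description, r.score)
        for s in systems
        for r in s.risks
        if r.score >= threshold
    ]
    return sorted(hits, key=lambda hit: hit[2], reverse=True)


# Hypothetical example entries
inventory = [
    AISystem(
        name="ChatGPT",
        risks=[Risk("Customer data pasted into prompts", likelihood=3, consequence=3)],
    ),
    AISystem(
        name="Email spam filter",
        owner="IT manager",
        embedded_in="Mail suite",
        risks=[Risk("Legitimate invoices filtered out", likelihood=2, consequence=2)],
    ),
]

print("No owner:", unowned(inventory))
print("Needs attention:", top_risks(inventory))
```

The point of the structure is that every system has a row, every row has a named owner or a visible gap, and the risks worth discussing float to the top.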

If you already have ISO 9001 or ISO 27001, the structure is familiar. ISO 42001 builds on the same foundation. Much of the work on context, leadership, and risk management is already done.

Read more about how the AI Act and ISO 42001 connect or get in touch if you want to know where your company stands.