A study published in Harvard Business Review in February 2026 found something unexpected. Researchers (Eatough, Ferrazzi, Smith, Waters) surveyed more than 3,000 employees across the United States and Europe, measuring what they call “AI angst” — anxiety about what AI means for one’s job, value, and future.

The finding: those with the highest anxiety use AI more than those with low anxiety. In the high-anxiety group, 65% of work is AI-assisted, versus 42% in the low-anxiety group. Yet the high-anxiety group simultaneously shows twice the level of resistance.

The researchers describe the behaviour as “performative rather than participatory.” People use AI to protect themselves, not because they believe in it.

88% of organisations report using AI regularly. Eight in ten employees have strong anxiety about at least one aspect of what AI means for them. The numbers look like success. Beneath the surface, something else is happening.

What people are actually worried about

This is not about the technology. The study identifies what drives the anxiety:

- 65% worry about being replaced by someone who uses AI better
- 61% worry that colleagues no longer see their unique value
- 60% worry that using AI makes others question their competence
- 44% feel that AI is making them less capable

It is an identity question. And that question drives behaviour more than any training programme or AI strategy does.

The pattern is familiar. It resembles how organisations handled quality management systems twenty years ago: we have a folder, we are certified, and nobody opens the folder. With AI, the equivalent is token, low-stakes use: summarise this email, rewrite this text. A glorified spell-checker. That hardly counts as the strategic advantage the management team dreamt of.

More training does not solve it

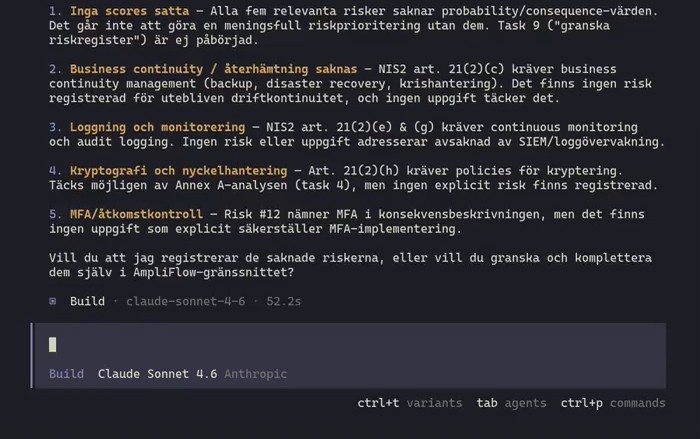

The study is clear that the usual responses, more training and clearer mandates, do not work. The researchers write that leaders must stop treating usage as evidence of engagement.

The problem is that people use AI and resist it simultaneously. It is not that they refuse. It is that they do just enough not to stand out. It looks like adoption in the figures. In reality, it is theatre.

The HBR article points to three things that need to change. Understand what type of anxiety dominates in your industry. Stop measuring usage as though it were the same thing as engagement. And create environments where it is safe to experiment before you scale.

That last point is significant. The researchers write specifically about healthcare: “Without clear guardrails around safety, bias, accountability, and workflow integration, enthusiasm alone is not enough to sustain scalable, responsible adoption.” Governance is not a brake. It is what enables people to press the accelerator.

What ISO 42001 actually does about it

Most people who hear “ISO standard for AI” think technology and compliance. But ISO 42001 is — in my reading, at least — surprisingly focused on people.

The standard asks organisations to assess how AI systems affect individuals’ psychological wellbeing (B.5.2). The impact assessment must also document how AI affects employment and skills development (B.5.3).

The question “what happens to my job?” must be asked formally. It cannot stay in the break room.

The standard forces the conversation about AI and work that most organisations avoid.

Consider what it actually requires, mapped against what the researchers found:

“I do not know what is permitted” — Clause 5.2 requires a documented AI policy communicated to everyone in the organisation. Annex A.9.2 requires documented processes for responsible AI use. Nobody should have to guess.

“Who is accountable if something goes wrong?” — Clause 5.3 requires that roles and responsibilities are defined and communicated. A.3.2 makes this concrete for AI-specific roles. Annex C states it plainly: “Where previously persons would be held accountable for their actions, their actions can now be supported by or based on the use of an AI system.” Accountability when AI is involved is a new kind of problem.

“Will AI replace me?” — The impact assessment (6.1.4, A.5.4) requires that you assess the impact on individuals throughout the AI system’s lifecycle. B.5.3 requires documenting how AI affects employment and skills development. And B.9.3 lists human override of AI decisions as an objective: “authority to override decisions made by the AI system.” Humans over machines, formally stated.

“Can I say something is wrong?” — A.3.3 requires a process through which employees can report concerns about AI systems. The implementation guidance (B.3.3) specifies protection against retaliation and the option of anonymity. In effect, a whistleblower mechanism for AI.

“Is AI making me less capable?” — Clause 7.2 requires that you identify what competence is needed and ensure people actually have it. Annex C refers to the need for “dedicated specialists with interdisciplinary skill sets.” The standard thus argues that AI requires more competence, not less.
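For teams that want to turn this mapping into a working checklist, here is a minimal sketch in Python. It is only an illustration: the names `ConcernMapping`, `CONCERN_MAP` and `open_concerns` are hypothetical, and the clause references are copied from the paragraphs above, not checked against the standard itself.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConcernMapping:
    concern: str       # the employee question, as worded in this article
    references: tuple  # ISO 42001 clause/annex references cited in this article


# Assumption: references are taken from the article text, not from the standard.
CONCERN_MAP = (
    ConcernMapping("I do not know what is permitted", ("5.2", "A.9.2")),
    ConcernMapping("Who is accountable if something goes wrong?", ("5.3", "A.3.2", "Annex C")),
    ConcernMapping("Will AI replace me?", ("6.1.4", "A.5.4", "B.5.3", "B.9.3")),
    ConcernMapping("Can I say something is wrong?", ("A.3.3", "B.3.3")),
    ConcernMapping("Is AI making me less capable?", ("7.2", "Annex C")),
)


def open_concerns(addressed):
    """Return concerns that still have at least one reference not yet addressed."""
    addressed = set(addressed)
    return [m.concern for m in CONCERN_MAP if not set(m.references) <= addressed]


if __name__ == "__main__":
    # Example: only the AI policy (5.2) and responsible-use processes (A.9.2) are in place.
    for concern in open_concerns(["5.2", "A.9.2"]):
        print("Still open:", concern)
```

Keeping the mapping as plain data makes it easy to review with the people it concerns. The point of the exercise is the conversation, not the checklist.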

What makes the difference

Management must live the culture, not delegate it with a policy document. Clause 5.1 notes that top management should work on “establishing, encouraging and modelling a culture within the organization, to take a responsible approach to using, development and governing AI systems.” Sending out a policy and hoping for the best is not sufficient.

Do you already have a management system for ISO 9001 or ISO 27001? ISO 42001 follows the same Annex SL framework, so you build AI governance on top of what you already have.

Patrik Björklund and Joakim Stenström at AmpliFlow were part of the working group that developed ISO 42001. We are building AI governance support within the same system where you manage your existing ISO certifications. If you would like to see how it connects, book a conversation.

Further reading: introduction to ISO 42001 and the connection to the EU AI Act.